Real vs AI Engagement: Why Your Voice Is the Scarcest Resource Now

Someone asked me a great question recently:

"Can AI agents actually post and comment on social media for me?"

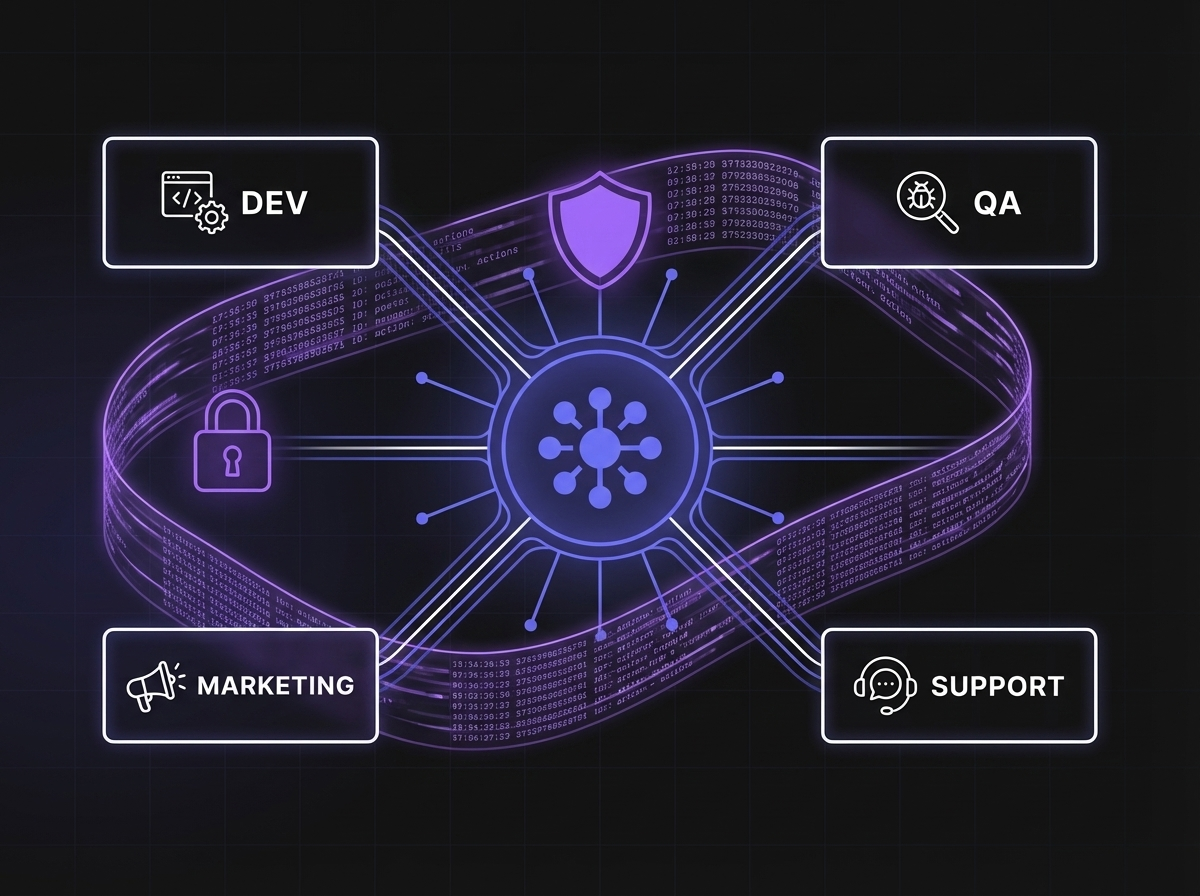

Technically? Yes. Fully automated. Operum ships Marketing and Community agents with connectors for Discord, Twitter/X, Telegram, Reddit, LinkedIn, and a few others. End-to-end automated posting and commenting works. I could, if I wanted to, wake up tomorrow morning to find that an AI agent had already posted on my LinkedIn, replied to three Reddit threads, and scheduled a week of Twitter content. The button is right there.

But "can it" and "should it" are not the same question. And the space between them is the most useful thing I've learned in the last six months of building this product.

This post is about what I've learned about that space — where AI helps, where it hurts, and why I think the value of a real human voice is actually going up, not down, as the internet fills with generated content.

The technical answer first

Yes, it's now trivially easy to fully automate social media with AI. Not just scheduling — generation, tone-matching, real-time replies. The capability is there, dozens of tools offer it, and you can buy a $29/month subscription and have AI run your entire social presence by next Tuesday.

But here's what I discovered the hard way: the line isn't between posting and commenting — it's between drafting and publishing.

Letting AI help you write, structure, and schedule your ideas is fine. That's how good writers have always worked: outlines, drafts, edits, a calendar. The agent is a faster pair of hands — it pulls in context, drafts in your voice, lines up the week. You still own the words.

What I'd avoid is letting AI hit "publish" for you. Not posts, not comments, not replies. The platforms are tightening the screws on automated conversation, and that's real — but the bigger reason is simpler. The moment you stop reading what goes out under your name, your voice stops being yours. Your audience can tell. So can the algorithm.

The platform enforcement landscape

Here's what we've seen across the platforms where Operum has connectors:

Reddit flags and bans automated commenting before almost any other behavior. Their spam detection is aggressive, their moderators are active, and the community norms are deeply hostile to anything that reads as inauthentic. I know founders who got their accounts shadowbanned in a week for running automated reply systems. Reddit users notice patterns in language, timing, and karma-to-comment ratios, and they report collectively.

Twitter/X has been rolling out aggressive enforcement against low-quality automated replies since late 2025. Replies that are clearly AI-generated at scale get throttled to near-zero visibility. Accounts get suspended entirely.

LinkedIn is the subtlest. They rarely announce enforcement actions. But anyone who tracks their impressions knows: engagement on comments that read as machine-generated gets suppressed. Your reply technically exists. Nobody sees it. LinkedIn has no incentive to tell you this is happening.

Discord and Telegram care less about content and more about account-level behavior — rate limits, IP reputation, bot accounts pretending to be humans. A labeled bot is fine. A bot posing as a person gets banned.

In every case, the enforcement lag is getting shorter. What worked in 2024 stopped working in 2025. What's working now might not work in six months.

The AI slop problem

Zoom out from platform rules for a second, because the real story is bigger than enforcement.

Our feeds are drowning in AI-generated content.

I'm not just talking about obvious AI images or the unmistakably-ChatGPT comments that start with "Great point!" and end with three emoji. I'm talking about the quieter flood: LinkedIn posts that read like they were written by a corporate communications team that has never met a human, Twitter threads that feel mathematically optimized for engagement, Instagram captions that use the same seven sentence structures, blog posts that sound like every other blog post on the topic.

The internet has an AI slop problem. That's the actual term people are using now. And it's not paranoia — the economics make it inevitable. When generating a post costs nothing and takes seconds, the supply of posts becomes effectively infinite. The only thing that doesn't scale is attention. So the signal-to-noise ratio of every social feed is collapsing.

What this means in practice: readers are getting faster and faster at recognizing AI content. Not with perfect accuracy — but with enough accuracy to distrust it by default. Scrolling has become pattern-matching for authenticity.

And trust is now the scarce resource. Not reach. Not impressions. Trust.

The pattern that actually works

After our own rounds of trial and error, the pattern we recommend (and the one we built our Marketing and Community agents around) is simple:

Founder describes the idea behind the message. Agents draft. Founder reviews. Founder sends.

Not "AI posts on your behalf." Not "autopilot your social presence." A deliberate, human-in-the-loop workflow where AI does what it's genuinely good at, and you stay in charge of what only you can decide.

In practice this looks like: I bring the angle — a rough note, a line in a doc, a voice memo while I'm walking the dog, sometimes just a half-formed thought I drop into Slack. The point of view is mine. Then the Marketing agent takes that idea and pulls in context from the week's blog posts, while the Community agent monitors Discord and Reddit for relevant discussions, and together they produce community-specific, tone-matched drafts — the right references, the right voice for each platform. I read them in the morning with coffee. The ones that nail it, I post. The ones that are close, I adjust. The ones that are off, I delete.
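For readers who think in code, here is a minimal Python sketch of that describe, draft, review, send loop. It is an illustration under my own assumptions, not Operum's actual implementation: the names `Status`, `Draft`, and `ReviewQueue` are hypothetical, and "publishing" here just returns a string instead of calling any platform API. The one thing it is meant to show is that the publish step refuses anything a human has not explicitly approved.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFTED = "drafted"      # produced by an agent, not yet read by a human
    APPROVED = "approved"    # the founder read it and signed off, possibly after edits
    REJECTED = "rejected"    # the founder decided it was off and dropped it
    PUBLISHED = "published"  # the founder sent it


@dataclass
class Draft:
    platform: str
    text: str
    status: Status = Status.DRAFTED


class ReviewQueue:
    """Hypothetical queue: agents add drafts, only a human can release them."""

    def __init__(self) -> None:
        self.drafts: list[Draft] = []

    def add_agent_draft(self, platform: str, text: str) -> Draft:
        # Agents can only ever create drafts in the DRAFTED state.
        draft = Draft(platform=platform, text=text)
        self.drafts.append(draft)
        return draft

    def approve(self, draft: Draft, edited_text: Optional[str] = None) -> None:
        # The human review step: optionally adjust the wording, then sign off.
        if edited_text is not None:
            draft.text = edited_text
        draft.status = Status.APPROVED

    def reject(self, draft: Draft) -> None:
        draft.status = Status.REJECTED

    def publish(self, draft: Draft) -> str:
        # The gate: nothing goes out unless a human approved it first.
        if draft.status is not Status.APPROVED:
            raise PermissionError("only human-approved drafts can be published")
        draft.status = Status.PUBLISHED
        # Stand-in for a real platform call, which this sketch does not make.
        return f"[{draft.platform}] {draft.text}"
```

In this sketch, calling `publish` on a fresh agent draft raises `PermissionError`; the same draft goes through only after `approve` has been called on it, with or without an edit. The design choice is deliberate: the approval state lives on the draft itself, so there is no code path from "agent wrote it" to "it went out" that skips the human.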

The result: I spend maybe twenty minutes a day on social posting. Without AI, it would be two or three hours (if I did it at all, which, honestly, I often didn't). Without my editing pass, the posts would all start sounding identical and the signal of me would disappear.

You keep the AI leverage. You stay on the right side of platform trust. You don't lose your voice.

What AI does brilliantly

Let me be specific about where AI is actually a force multiplier, because vague "AI is helpful" claims are part of the slop problem.

In my own daily workflow, AI is genuinely brilliant at:

- Automation and logistics. Scheduling, cross-posting, formatting, resizing images, generating alt text.

- Helping me sharpen my own thoughts. I talk out a rough idea, AI asks clarifying questions, and by the end I actually know what I think. This use case surprised me most.

- Research and summarization. Reading twenty competitor pages and pulling patterns. Summarizing a 40-comment Reddit thread.

- Catching blind spots. "What's the strongest argument against this?" is one of my most-used prompts.

- Tone-matching across platforms. My Reddit voice is not my LinkedIn voice is not my blog voice. AI is unreasonably good at translating once you've shown it examples.

- Fixing my spelling. (Hi to my fellow non-native English speakers. You know.)

- Turning scrappy bullet points into readable paragraphs. I write in fragments. AI turns fragments into prose, and I edit back to something that sounds like me.

- Surfacing what I already believe but haven't articulated. Sometimes the AI's draft is wrong in exactly the way that makes me realize what the right version is.

Notice the pattern. In every case, AI is doing the mechanical or exploratory work. The judgment is still mine. The voice is still mine. The decision about what to publish is still mine.

What AI can't do

Now the other side.

AI cannot replace the human part of what we do. This isn't a platitude — it's a specific technical limitation with specific consequences.

AI doesn't have a soul. I'm not being mystical. I'm saying: it has no stake. It doesn't want anything. When I write about my pivot from HR to founding an AI company, that post has the weight of actual years of my actual life behind it. AI can produce something that reads like that post. It cannot produce a post that is that post.

AI makes mistakes confidently, often by pulling information from the wrong context. It will cite a study that doesn't exist. It will attribute a quote to the wrong person. It will confuse last year's product features with this year's. This is fine when you're brainstorming. It is disastrous when you're speaking publicly on behalf of your company.

AI doesn't have lived experience. The comment I wrote on Reddit last week that got a hundred upvotes — the one about a parent building an app with their nine-year-old — worked because I was also a parent bonding with my partner through a weird professional journey. AI could write a comment on that thread. It couldn't write that comment.

AI doesn't fully feel nuance. It can analyze nuance; it can't feel the floor tilt. In conversation, that difference shows up in about three exchanges.

AI doesn't have relationships. The founders I've talked to for an hour on Zoom, the users who have DMed me with feedback, the friends who sent me links while I was building — those are my relationships. AI cannot maintain them. It can help me keep track of them. That's different.

The 95/5 rule for founder engagement

If you take one practical thing from this post, take this: for any community-facing engagement as a founder, aim for 95% human and 5% assisted.

The assisted 5% is your drafting, your scheduling, your cross-posting, your logistics. The human 95% is the decision of what to publish, the final edit in your voice, the reply to the person who took the time to respond, the judgment call about whether this is worth saying at all.

The founders I see succeeding on social in 2026 are not the ones who automated the hardest. They're the ones who figured out where automation helps without betraying the reader.

Why your voice is more valuable now than it was last year

Here's the part I actually want people to hear:

The value of a real human voice is going up, not down, as AI content floods everything.

This is counterintuitive. The narrative for the past two years has been: AI will replace writers, AI will replace marketers, AI will replace founder-led content. And in one sense, that's true — you can now generate infinite mediocre content for free.

But that's exactly the point. When mediocre content is infinite, the scarce thing is not content. The scarce thing is content that actually means something. Content where you can feel a real person on the other end. Content that couldn't have been written by anyone else.

That's what you already have, as a founder. Your specific history. Your specific opinions. The weird mix of things only you care about. The calluses you earned building your product.

Use AI to remove friction. Don't use it to replace yourself. Because in a world that's going to keep getting flooded with generated content, the scarcest resource isn't reach.

It's a person who actually means what they say.

That's the bet Operum is built on — that human-in-the-loop beats autopilot, every time, because trust doesn't scale the way content does. And trust is the only thing that matters now.

Olga Primak is the founder of Operum, an AI agent orchestration platform for builders and founders.