The Complete Guide to AI Agent Orchestration in 2026

What is AI agent orchestration?

AI agent orchestration is the coordination of multiple specialized AI agents working together on a shared goal. Instead of one AI assistant doing everything, orchestration assigns distinct roles to purpose-built agents and manages how they hand off work, share context, and make decisions.

Think of it like a startup team. You do not hire one person to be the PM, architect, engineer, tester, marketer, and community manager. You hire specialists. Each one is better at their role than any generalist could be, and the value comes from how they coordinate — not from any single individual.

AI agent orchestration applies the same principle to AI. A PM agent triages and prioritizes. An Architect agent reviews technical decisions. An Engineer agent writes code. A Tester agent validates quality. A Marketing agent creates content. A Community agent handles support. Each agent has focused context, specialized prompts, and clear boundaries.

The orchestration layer is what makes them a team instead of six disconnected tools.

Why single-agent AI is not enough

Every major AI coding tool in 2026 — Codex, Claude Code, Cursor, Copilot, Devin, Windsurf, OpenClaw — follows the same model: one agent, one role, one task at a time.

These tools are genuinely impressive. Claude Code scores 80.8% on SWE-bench. Cursor's autocomplete feels telepathic. Copilot can generate entire PRs from issue descriptions.

But they all share the same limitation: they only accelerate coding.

If you are a developer at a large company with a PM, a QA team, and a marketing department, that is fine. Your AI tool makes you faster at your specific job.

If you are a startup founder, coding is 20-40% of your week. The rest is project management, architecture decisions, testing, marketing, community support, and the coordination overhead that connects all of it. No single-agent tool touches that other 60-80%.

This is not a criticism of these tools. It is a category observation. The market built seven variations of a coding assistant and left the rest of the product lifecycle completely unaddressed.

How orchestration works

AI agent orchestration has four core components:

1. Specialized agents

Each agent has a defined role, a focused context window, and clear boundaries on what it can and cannot do. Specialization matters because narrower context leads to more consistent, higher-quality output.

| Agent | Role | What it does |
|---|---|---|
| PM | Orchestrator | Triages issues, coordinates workflow, manages the pipeline, communicates with you |
| Architect | Technical advisor | Reviews feasibility, provides architectural guidance, catches design problems early |
| Engineer | Builder | Writes code, creates pull requests, implements features |
| Tester | Validator | Tests changes, catches bugs, approves quality before review |
| Marketing | Growth lead | Creates content, manages SEO, drives acquisition |
| Community | Support manager | Monitors Discord and social channels, handles user support |

Learn more about each agent on the Agents page.
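
To make "defined role, focused context, clear boundaries" concrete, here is a minimal sketch of how a role could be modeled. It is an illustration under assumptions, not Operum's implementation: the `AgentRole` class, the prompts, and the action names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """One specialized agent: a role, a focused prompt, and explicit boundaries."""
    name: str
    role: str
    system_prompt: str
    allowed_actions: frozenset[str]

    def can(self, action: str) -> bool:
        # Boundary check: an agent may only perform actions it was granted.
        return action in self.allowed_actions


# Hypothetical prompts and action names, chosen for illustration only.
ENGINEER = AgentRole(
    name="Engineer",
    role="Builder",
    system_prompt="You write code and open pull requests.",
    allowed_actions=frozenset({"write_code", "open_pull_request"}),
)
TESTER = AgentRole(
    name="Tester",
    role="Validator",
    system_prompt="You test changes and report bugs.",
    allowed_actions=frozenset({"run_tests", "approve_quality"}),
)
```

With this shape, `ENGINEER.can("approve_quality")` is false: the Engineer cannot sign off on its own work, which is the kind of boundary the table above implies.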

2. Pipeline coordination

Agents do not talk to each other directly, because direct agent-to-agent communication creates chaos: deadlocks, race conditions, conflicting actions. Instead, work flows through a structured pipeline with clear handoffs:

backlog → needs-architecture → ready-for-dev → in-progress → needs-testing → needs-review → done

Each stage has one owner. When an agent finishes, it updates the stage, which signals the next agent. The pipeline is the backbone of orchestration: it prevents agents from stepping on each other while keeping work moving forward.

See how the workflow pipeline operates in practice.
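
The pipeline can be read as a small state machine. The sketch below uses the stage names from this article, but the owner assigned to each stage is an assumption made for illustration (the article only says each stage has one owner), and `advance` is a hypothetical helper.

```python
STAGES = [
    "backlog",
    "needs-architecture",
    "ready-for-dev",
    "in-progress",
    "needs-testing",
    "needs-review",
    "done",
]

# Assumed ownership mapping, for illustration only.
STAGE_OWNER = {
    "backlog": "PM",
    "needs-architecture": "Architect",
    "ready-for-dev": "Engineer",
    "in-progress": "Engineer",
    "needs-testing": "Tester",
    "needs-review": "Human",
    "done": None,
}


def advance(stage: str, agent: str) -> str:
    """Move an issue to the next stage, but only if `agent` owns the current one."""
    if stage == "done":
        raise ValueError("issue is already done")
    owner = STAGE_OWNER[stage]
    if agent != owner:
        raise PermissionError(f"{agent} does not own stage {stage!r} (owner: {owner})")
    return STAGES[STAGES.index(stage) + 1]
```

Because only the owner can advance a stage, two agents can never act on the same issue at the same time, which is how a pipeline avoids the conflicts that direct agent-to-agent chatter invites.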

3. Shared knowledge base

This is the component that separates orchestration from simple task automation. Every agent reads from and writes to a shared knowledge base that contains:

- Architectural decisions and the reasoning behind them

- Coding conventions and project preferences

- Known issues, bug patterns, and fragile modules

- Deployment procedures and release checklists

- Team preferences and communication style

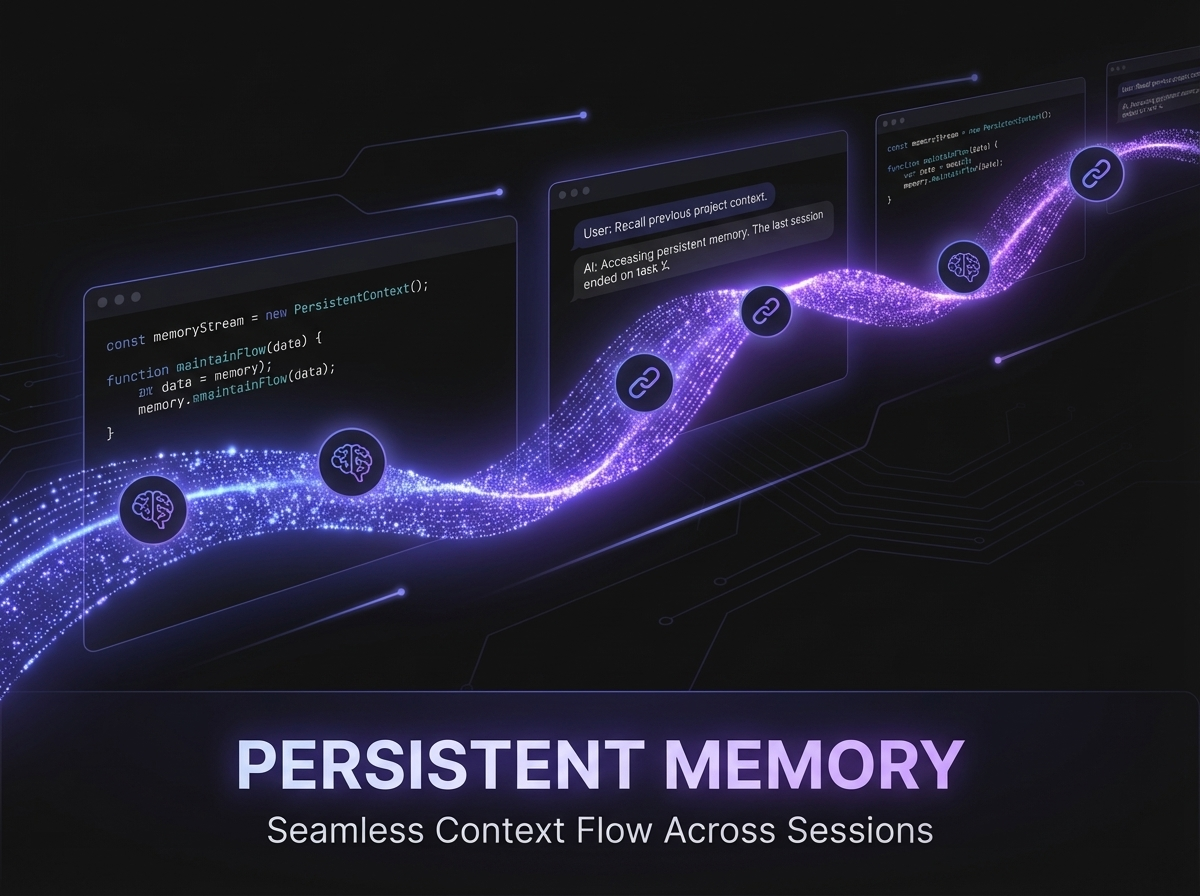

When the Engineer creates a PR, the Tester already knows the architecture. When the Architect reviews a design, they know what was tried before and why it was rejected. Context compounds over time — the agents never lose context between sessions.
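
As a rough sketch of what "shared and persistent" means in practice, here is a tiny file-backed store. The `KnowledgeBase` class, its JSON layout, and the category names are assumptions for illustration; a real knowledge base would be more structured than this.

```python
import json
from pathlib import Path


class KnowledgeBase:
    """A tiny file-backed store that every agent reads from and writes to."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load earlier entries so context survives across sessions.
        self.entries = json.loads(path.read_text()) if path.exists() else []

    def record(self, author: str, category: str, note: str) -> None:
        # Append the note and persist immediately so other agents can see it.
        self.entries.append({"author": author, "category": category, "note": note})
        self.path.write_text(json.dumps(self.entries, indent=2))

    def lookup(self, category: str) -> list[str]:
        return [e["note"] for e in self.entries if e["category"] == category]
```

If the Architect records a decision in one session, a Tester that opens the same file in a later session gets it back from `lookup("decision")` without anyone repeating the explanation.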

4. Human-in-the-loop

Orchestration does not mean full autonomy. Nothing ships without human approval. The orchestrator presents decisions; the human approves or redirects. You review every PR, approve every merge, and make every strategic call.

The value is not replacing your judgment. It is eliminating the coordination overhead that prevents you from exercising it effectively.
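
In code, the approval step amounts to a gate that every proposed action must pass through. This is a minimal sketch: `Proposal`, `ship`, and the action name in the comment are hypothetical, and the `approve` callback stands in for whatever review surface the human actually uses.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    agent: str
    action: str   # e.g. "merge_pull_request" (hypothetical action name)
    summary: str


def ship(proposal: Proposal, approve: Callable[[Proposal], bool]) -> str:
    """Let the action through only after the human reviewer says yes."""
    if not approve(proposal):
        return "redirected"   # the human rejected it or asked for changes
    return "shipped"
```

The agent never calls the shipping step itself. It can only hand a `Proposal` to the gate, so the decision always sits with the person reviewing it.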

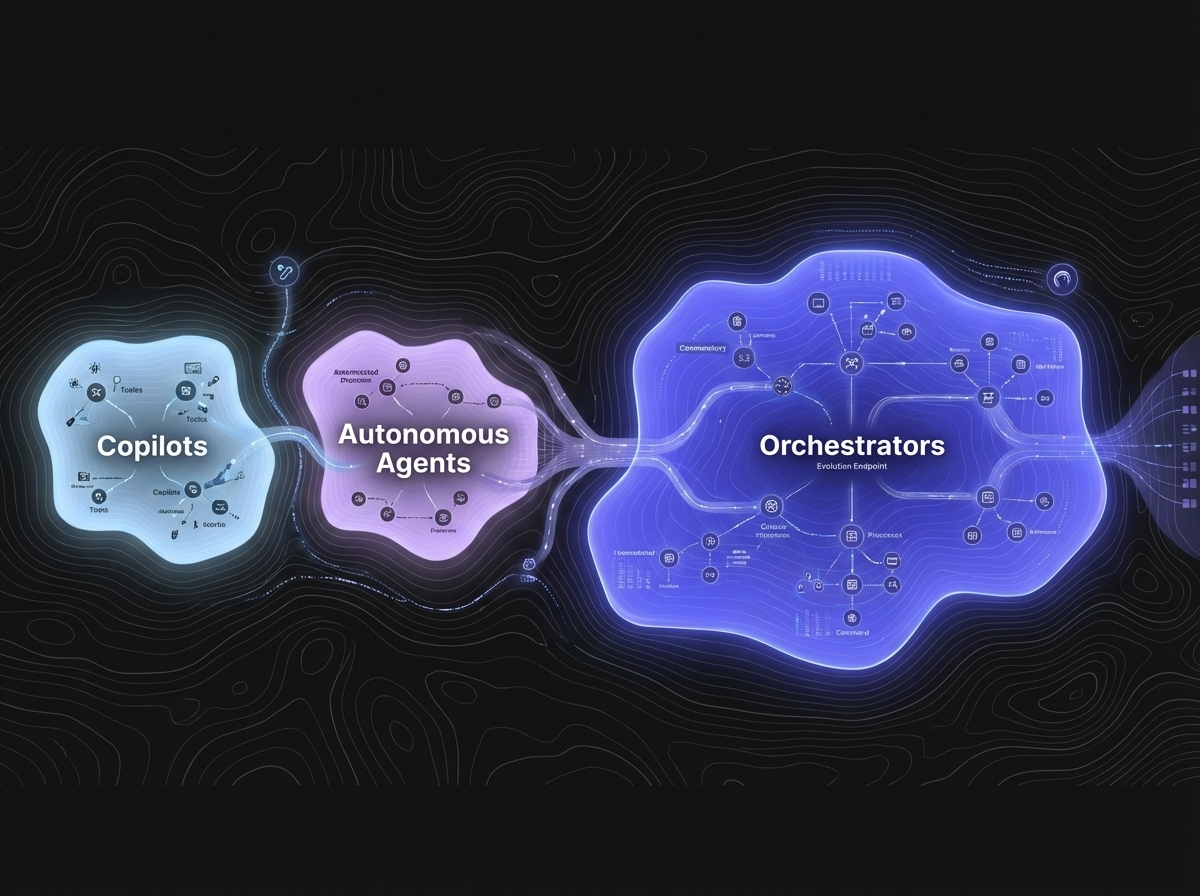

Orchestration vs. automation vs. agents

These terms get conflated. Here is how they differ:

| Concept | What it means | Example |
|---|---|---|
| Automation | Scripted, deterministic workflows | CI/CD pipeline runs tests on every push |
| AI Agent | An AI that can take actions autonomously within constraints | Copilot generates a PR from an issue |
| Orchestration | Coordination of multiple agents through a shared pipeline with human oversight | 6 agents coordinate to take an issue from backlog to deployed code |

Automation is rigid — it follows predefined rules. A single agent is flexible but isolated — it does not know what other agents are doing. Orchestration combines flexibility with coordination — multiple agents work together on the same goal, sharing context and handing off work through structured stages.

What to look for in an orchestration platform

If you are evaluating AI agent orchestration tools, here are the questions that matter:

Does it support specialized agents?

Running multiple copies of the same agent is parallelization, not orchestration. True orchestration assigns different roles with different capabilities, different context, and different tools.

Is context persistent and shared?

Agents that forget everything between sessions are interns, not teammates. Look for structured, persistent knowledge that compounds over time and is shared across all agents. This is the difference between a tool that works on day one and a team that gets better every month.

Is the pipeline transparent?

Can you see what every agent is doing? Can you audit who did what and when? If the orchestration is a black box, you cannot trust it with real work. The best orchestration platforms use your existing tools (like GitHub) as the coordination layer, so every action is visible in your normal workflow.
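
"Who did what and when" boils down to an append-only event log. The sketch below assumes a simple in-memory `AuditLog`; the class, field names, and example actions are hypothetical, and a platform that coordinates through GitHub would get the same record from comments, labels, and commits.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    agent: str
    action: str
    target: str
    at: datetime


class AuditLog:
    """Append-only record of who did what and when."""

    def __init__(self) -> None:
        self._events: list[AuditEvent] = []

    def record(self, agent: str, action: str, target: str) -> AuditEvent:
        # Timestamps are stored in UTC so events from any machine sort consistently.
        event = AuditEvent(agent, action, target, datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def by_agent(self, agent: str) -> list[AuditEvent]:
        return [e for e in self._events if e.agent == agent]
```

Events are frozen once written, so the log can answer audit questions later without anyone being able to edit history in place.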

Is it local-first?

Where does your code go? If orchestration requires sending your codebase to a cloud service, that is a non-starter for many teams. Local-first orchestration means your code stays on your machine, your secrets stay in your keychain, and the AI processes run where you control them.

Can you swap AI engines?

The AI landscape changes fast. A platform locked to one provider becomes a liability when that provider changes pricing, quality, or terms. Look for pluggable engines — the ability to run different AI models for different agents without changing your workflows.
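
One way to picture pluggable engines is a single interface that every model backend implements, plus a per-agent mapping. Everything named here (`Engine`, `EchoEngine`, `EngineRegistry`) is a hypothetical sketch, and `EchoEngine` is a stand-in that returns its input instead of calling a real model.

```python
from typing import Protocol


class Engine(Protocol):
    def complete(self, prompt: str) -> str: ...


class EchoEngine:
    """Stand-in engine for illustration; a real one would call a model."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        return f"[{self.label}] {prompt}"


class EngineRegistry:
    """Maps each agent to an engine, with a default for everyone else."""

    def __init__(self, default: Engine) -> None:
        self.default = default
        self.per_agent: dict[str, Engine] = {}

    def assign(self, agent: str, engine: Engine) -> None:
        self.per_agent[agent] = engine

    def run(self, agent: str, prompt: str) -> str:
        # The workflow calls run(); which engine answers is a config detail.
        return self.per_agent.get(agent, self.default).complete(prompt)
```

Swapping providers then means changing one `assign` call. The workflow code that calls `run` stays the same.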

Is there human-in-the-loop by default?

Full autonomy sounds exciting until an agent makes a costly mistake. The right default is human approval for every meaningful action, with the option to increase autonomy as trust builds.
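
"Approval by default, autonomy by opt-in" can be expressed as a policy where anything not explicitly trusted still needs a human. The `AutonomyPolicy` class and the action names in the usage note are assumptions for illustration, not any platform's actual settings.

```python
from enum import Enum


class Autonomy(Enum):
    ASK = "ask"      # human must approve (the default)
    AUTO = "auto"    # agent may proceed on its own


class AutonomyPolicy:
    def __init__(self) -> None:
        self._overrides: dict[str, Autonomy] = {}

    def trust(self, action: str) -> None:
        """Opt in to autonomy for one action type."""
        self._overrides[action] = Autonomy.AUTO

    def needs_approval(self, action: str) -> bool:
        # Anything not explicitly trusted falls back to human approval.
        return self._overrides.get(action, Autonomy.ASK) is Autonomy.ASK
```

Calling `policy.trust("add_label")` relaxes one low-risk action while `policy.needs_approval("merge_pull_request")` stays true, so trust grows one action type at a time.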

Use cases for AI agent orchestration

Solo founders and solopreneurs

You are the PM, the architect, the engineer, the tester, the marketer, and the support team. Orchestration gives you back the other five roles. You focus on the creative, strategic work. The agents handle coordination, implementation, testing, content, and support.

See how Operum works for individuals →

Small development teams (2-5 people)

Your team is talented but stretched. Orchestration fills the gaps — QA that does not get skipped, marketing that does not wait, support that does not lag. The agents extend your team's capacity without extending your headcount.

Enterprise engineering organizations

Orchestration at enterprise scale means pluggable AI engines, local inference for data sovereignty, full audit trails for compliance, and integration with existing tools. The orchestration layer sits above your AI tools and below your processes — adapting to your constraints, not the other way around.

See how Operum works for organizations →

How Operum implements orchestration

Operum is a desktop application that orchestrates 6 specialized AI agents. Here is what makes it different:

Local-first architecture. Built with Tauri (Rust backend, SvelteKit frontend). Your code stays on your machine. Agents run locally. Secrets stay in your OS keychain.

GitHub as the coordination layer. Every agent action shows up as a GitHub comment, label change, or commit. You can see the entire pipeline in your existing GitHub workflow. No separate dashboard to check. (Deep-dive on GitHub coordination →)

Persistent shared knowledge. All 6 agents read from and write to a structured knowledge base. Context compounds across sessions. The agents that work on your project in month three know far more about it than they did on day one.

Bring Your Own Engine (BYOE). Currently runs on Claude Code Max. More engines coming soon — including local models via Ollama for teams that need zero data egress.

Human-in-the-loop by default. Nothing ships without your approval. You can increase autonomy with Turbo Mode and Auto-Merge when you are ready.

Getting started

- Download Operum — available on macOS, Windows, and Linux

- Connect your AI engine — currently Claude Code Max ($100+/month from Anthropic)

- Connect your GitHub repo — agents coordinate through your existing issues and PRs

- Create your first issue — describe what you want built, and watch 6 agents coordinate to deliver it

The free trial includes all 6 agents, 1 project, and 14 days to see orchestration in action. No credit card required.

View pricing → | See how it works → | Quick start guide →

Frequently asked questions

Do I need to configure each agent separately?

No. Agents come pre-configured with sensible defaults. You can customize their behavior, AI model, and communication style from the Settings page.

Can I use Operum without GitHub?

Yes, for content, marketing, and community workflows. For code-related tasks, GitHub integration is recommended. More issue trackers (Jira, Linear) are on the roadmap.

What happens if an agent makes a mistake?

Nothing ships without your approval. You review every PR before merging. If an agent produces low-quality work, you reject it and provide feedback, and the agent learns from the shared knowledge base.

Is my code sent to the cloud?

Operum runs locally. When agents process tasks, code context is sent to your AI provider (e.g., Anthropic) for processing. Operum itself does not store your code on any server. For full data sovereignty, local inference support is coming soon.

How is this different from just using Claude Code?

Claude Code is an excellent engineering tool. Operum orchestrates Claude Code (and future engines) as part of a 6-agent team that covers PM, architecture, engineering, testing, marketing, and community: the full product lifecycle, not just coding.

Aleksandr Primak is the founder of Operum, a multi-agent AI team for builders and founders.